Introduction to Kubernetes - ReplicaSets

DevRel at StackGen | Formerly at Deepfence, Tenable, Accurics | AWS Community Builder and Docker Community Award Winner at Dockercon2020 | CyberSecurity Innovator of the Year 2023 award by Bsides Bangalore | Docker/HashiCorp Meetup Organiser, Bangalore | Co-Author of Learn Lightweight Kubernetes with k3s (2019), Packt Publication | I also run the non-profit CloudNativeFolks / CloudSecCorner community to empower free education. Reach out to me on Twitter at https://twitter.com/sangamtwts or follow me on GitHub at https://github.com/sangam14 for valuable resources.

Why do we need ReplicaSets?

Most applications should be scalable, and all must be fault tolerant. Pods on their own do not provide those features; ReplicaSets do.

A ReplicaSet ensures that the number of running Pod replicas always matches the desired number declared in its spec. In other words, ReplicaSets make Pods scalable.

If you're familiar with the ReplicationController, you can think of a ReplicaSet as an extended version of it. The only significant difference is that a ReplicaSet has extended support for selectors (set-based as well as equality-based); everything else is the same. The ReplicationController is now treated as a legacy API, and ReplicaSets are recommended in its place.
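
To make the selector difference concrete, here is a minimal sketch of a ReplicaSet that uses a set-based selector (matchExpressions), which a ReplicationController cannot express. The file name replicaset-set-based.yaml, the object name, and the second label value my-app-v2 are made up for illustration.

```shell
# Write a hypothetical manifest that uses a set-based selector.
cat > replicaset-set-based.yaml <<'EOF'
apiVersion: apps/v1
kind: ReplicaSet
metadata:
  name: my-replicaset-set-based   # hypothetical name
spec:
  replicas: 2
  selector:
    matchExpressions:
      # Set-based requirement: the "app" label must be one of these values.
      - key: app
        operator: In
        values:
          - my-app
          - my-app-v2             # hypothetical second value
  template:
    metadata:
      labels:
        app: my-app               # must satisfy the selector above
    spec:
      containers:
        - name: my-container
          image: nginx
EOF
```

The Pod template's labels have to satisfy the selector, otherwise the API server rejects the ReplicaSet.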

When to use a ReplicaSet?

A ReplicaSet ensures that a specified number of pod replicas are running at any given time. However, a Deployment is a higher-level concept that manages ReplicaSets and provides declarative updates to Pods along with a lot of other useful features. Therefore, we recommend using Deployments instead of directly using ReplicaSets, unless you require custom update orchestration or don't require updates at all.

This actually means that you may never need to manipulate ReplicaSet objects: use a Deployment instead, and define your application in the spec section.
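
As a rough sketch of what that recommendation looks like in practice, the manifest below describes the same kind of application as a Deployment, which then creates and manages a ReplicaSet on your behalf. The file name deployment-app.yaml and the name my-deployment are assumptions for illustration; the labels, container name, and image mirror the ReplicaSet example used in the rest of this post.

```shell
# Write a hypothetical Deployment manifest; the Pod template sits under spec.template.
cat > deployment-app.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-deployment        # hypothetical name
spec:
  replicas: 2
  selector:
    matchLabels:
      app: my-app
  template:
    metadata:
      labels:
        app: my-app
    spec:
      containers:
        - name: my-container
          image: nginx
EOF
```

After applying it with kubectl apply -f deployment-app.yaml, kubectl get rs should list a ReplicaSet whose name starts with my-deployment-.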

Let's define a ReplicaSet. Save the following manifest as replicaset-app.yaml:

apiVersion: apps/v1
kind: ReplicaSet
metadata:
  name: my-replicaset
spec:
  replicas: 2
  selector:
    matchLabels:
      app: my-app
  template:
    metadata:
      labels:
        app: my-app
    spec:
      containers:
        - name: my-container
          image: nginx
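
Because indentation mistakes in a manifest are easy to make, it can help to check the file before creating anything. The sketch below wraps a client-side dry run in a small helper; the function name validate_manifest is an assumption, not part of kubectl.

```shell
# Run a client-side dry run: kubectl parses the manifest and reports what
# it would create, without actually creating the object.
validate_manifest() {
  kubectl create --dry-run=client -f "$1"
}

# Usage (requires kubectl on the PATH):
#   validate_manifest replicaset-app.yaml
```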

Create the ReplicaSet

$ kubectl create -f replicaset-app.yaml

replicaset.apps/my-replicaset created

Check the ReplicaSet status

$ kubectl get replicaset my-replicaset

NAME            DESIRED   CURRENT   READY   AGE
my-replicaset   2         2         2       17s

Name: the name of the ReplicaSet, declared under the metadata property.

Desired: the replica count specified in the YAML manifest.

Current: the number of replicas the ReplicaSet currently has; here, two Pods have been created.

Ready: the number of replicas that are ready and in the running state; here, both.

Age: how long ago the ReplicaSet was created.
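
The same numbers can be read straight from the object, which is handy in scripts. This is a minimal sketch; the helper name rs_counts is hypothetical, while the fields it reads (.spec.replicas, .status.replicas, .status.readyReplicas) back the DESIRED, CURRENT, and READY columns.

```shell
# Print "desired current ready" for a ReplicaSet, read from its spec and status.
# The ready field may print as empty while no Pod is ready yet.
rs_counts() {
  kubectl get replicaset "$1" \
    -o jsonpath='{.spec.replicas} {.status.replicas} {.status.readyReplicas}{"\n"}'
}

# Usage (requires kubectl and a running cluster):
#   rs_counts my-replicaset
```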

Scale the ReplicaSet to 5 replicas and check the Pod status

$ kubectl get pods

NAME                  READY   STATUS    RESTARTS   AGE
my-replicaset-bq9wz   1/1     Running   0          15m
my-replicaset-c5h4x   1/1     Running   0          3m43s
my-replicaset-hj59z   1/1     Running   0          3m43s
my-replicaset-hpvxr   1/1     Running   0          15m
my-replicaset-xgwfx   1/1     Running   0          3m43s

Change the replicas value to 1 in replicaset-app.yaml and re-apply it

$ kubectl replace -f replicaset-app.yaml

replicaset.apps/my-replicaset replaced

Check the ReplicaSet status again

$ kubectl get replicaset my-replicaset

NAME            DESIRED   CURRENT   READY   AGE
my-replicaset   1         1         1       4m9s

Scale down and scale up

$ kubectl scale --replicas=5 -f replicaset-app.yaml   # Scale up

$ kubectl scale --replicas=1 -f replicaset-app.yaml   # Scale down

or

$ kubectl scale --replicas=5 replicaset <replicaset name>   # Scale up

$ kubectl scale --replicas=1 replicaset <replicaset name>   # Scale down
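
Scaling is one side of what the ReplicaSet does; the other is replacing Pods that disappear. A rough way to see that fault tolerance is to delete one Pod and list the Pods again. The helper name delete_one_pod is hypothetical, and the sketch assumes at least one Pod matches the selector.

```shell
# Delete one Pod matched by a label selector, then list the Pods again.
# The ReplicaSet controller should create a replacement to restore the count.
delete_one_pod() {
  selector="$1"
  # Pick the first Pod name that matches the selector.
  pod=$(kubectl get pods -l "$selector" -o jsonpath='{.items[0].metadata.name}')
  kubectl delete pod "$pod"
  kubectl get pods -l "$selector"
}

# Usage (requires kubectl and a running cluster):
#   delete_one_pod app=my-app
```

The final listing should show a Pod with a new random suffix and a much smaller AGE than the others.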

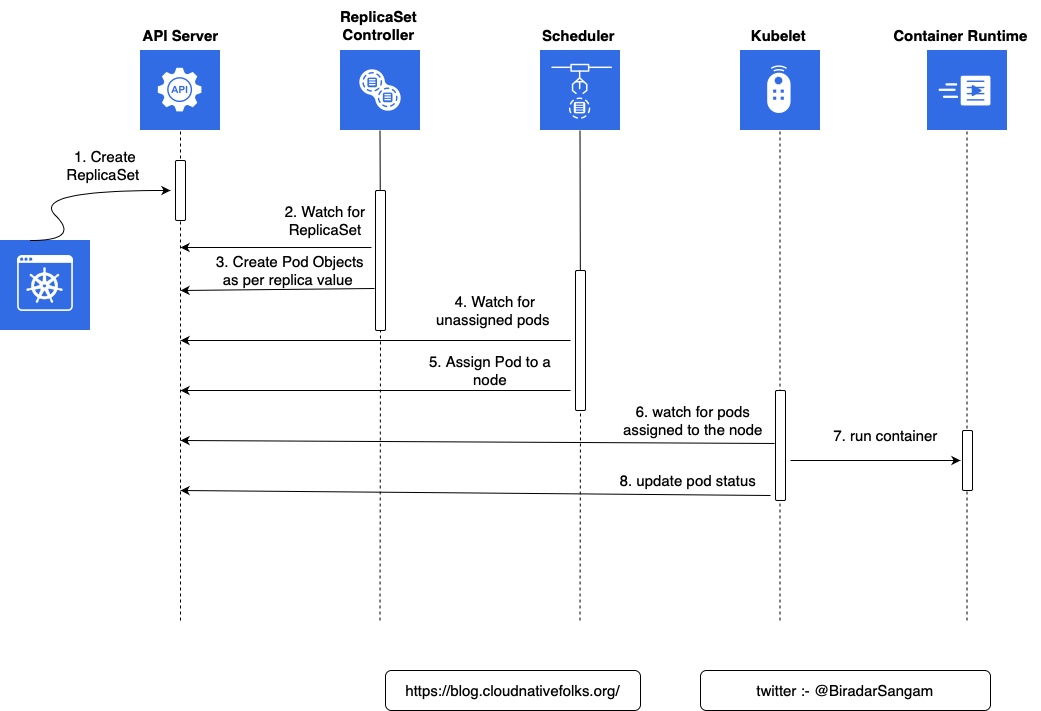

Sequential breakdown of the process

The sequence of events that transpired with the kubectl create -f replicaset-app.yaml command is as follows.

1. The Kubernetes client (kubectl) sent a request to the API server requesting the creation of a ReplicaSet defined in the replicaset-app.yaml file.

2. The controller is watching the API server for new events, and it detected that there is a new ReplicaSet object.

3. The controller creates two new Pod definitions because we have configured the replicas value as 2 in the replicaset-app.yaml file.

4. Since the scheduler is watching the API server for new events, it detected that there are two unassigned Pods.

5. The scheduler decided which node to assign each Pod to and sent that information to the API server.

6. Kubelet is also watching the API server. It detected that the 2 Pods were assigned to the node it is running on.

7. Kubelet sent requests to the container runtime requesting the creation of the containers that form each Pod. In our case, each Pod defines one container based on the nginx image, so in total 2 containers are created.

8. Finally, Kubelet sent a request to the API server notifying it that the Pods were created successfully.
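
Most of this sequence leaves a trail that you can inspect afterwards. The sketch below groups three standard kubectl commands in a helper; the function name trace_replicaset is an assumption, and the exact events you see depend on your cluster.

```shell
# Show the objects and events produced while a ReplicaSet was created.
trace_replicaset() {
  name="$1"
  # The Events section lists the Pods the ReplicaSet controller created.
  kubectl describe replicaset "$name"
  # The NODE column shows where the scheduler placed each Pod.
  kubectl get pods -o wide
  # Events in chronological order (scheduling, image pulls, container starts).
  kubectl get events --sort-by=.metadata.creationTimestamp
}

# Usage (requires kubectl and a running cluster):
#   trace_replicaset my-replicaset
```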

Delete ReplicaSets

ReplicaSets and Pods are loosely coupled objects linked by matching labels, so we can remove one without deleting the other.

kubectl delete -f replicaset-app.yaml --cascade=orphan

We used the --cascade=orphan argument to prevent Kubernetes from removing all the downstream objects, so the Pods created by the ReplicaSet are left in place.

Confirm that the ReplicaSet has been removed from the system

kubectl get rs

Check that the Pods are still running

kubectl get pods
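
Orphaned Pods keep running until you remove them yourself. As a minimal sketch, the helpers below show who owns each Pod and then clean up by label; the function names pod_owners and delete_pods_by_label are hypothetical, and app=my-app is the label from the manifest above.

```shell
# List each Pod matching a selector together with the kind of its owner.
# An orphaned Pod should show an empty owner column.
pod_owners() {
  kubectl get pods -l "$1" \
    -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.metadata.ownerReferences[*].kind}{"\n"}{end}'
}

# Remove the orphaned Pods once they are no longer needed.
delete_pods_by_label() {
  kubectl delete pods -l "$1"
}

# Usage (requires kubectl and a running cluster):
#   pod_owners app=my-app
#   delete_pods_by_label app=my-app
```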